Jwala Dhamala

I am a Senior Scientist at Amazon AGI, California. My research focuses on advancing Artificial Intelligence through the development of large language models, agentic models, and reasoning models that are helpful, capable, and safe. My specific interests include benchmark curation, the design of robust evaluation metrics, and the evaluation of models to assess their alignment with responsible AI policies. I am also engaged in uncovering model vulnerabilities through novel jailbreak attacks and red-teaming methodologies. I am also interested in developing agentic systems and LLMs for applied domains such as healthcare.

Prior to joining Amazon, I completed my Ph.D. in Computing and Information Sciences at the Rochester Institute of Technology (RIT), where I worked under the supervision of Dr. Linwei Wang in the Computational Biomedicine Lab. My doctoral research centered on personalization and uncertainty quantification in multi-scale 3D simulation models of cardiac electrophysiology. This work allowed me to operate at the intersection of machine learning—specifically Bayesian modeling, optimization, generative modeling, and graph convolutional networks—and computational healthcare, with a focus on personalized cardiac modeling.

I am always open to research collaborations in areas related to AI safety, model evaluation, and trustworthy machine learning. Feel free to reach out at jwala [dot] dhamala [at] gmail [dot] com if you are interested in collaborating.

News

- July 2026 NEW Co-organizing the 6th TrustNLP Workshop at ACL 2026 in San Diego.

- 2026 NEW LH-Deception, our paper on LLM deceptive behaviors in long-horizon interactions, accepted at ICLR 2026.

- 2025 NEW Paper on best practices for agentic benchmarks accepted at NeurIPS 2025 Datasets and Benchmarks Track.

- 2025 NEW Released the Amazon Nova Technical Report.

- May 2025 Organized TrustNLP workshop at NAACL 2025.

- 2024 Paper led by our intern Elan on zero-shot reasoning with knowledge graphs accepted at ACL.

- 2023 Paper led by our intern Nina accepted at ACL.

- 2023 Paper led by our intern Elia accepted at ACM FAccT 2023.

- May 2022 Three papers accepted at ACL 2022: [1] [2] [3].

- May 2022 Organized TrustNLP workshop at NAACL 2022.

- Oct 2021 Presented at the WeCNLP Summit.

- July 2021 Organized Responsible AI workshop at KDD 2021.

- May 2021 Organized TrustNLP at NAACL 2021.

- Jan 2021 Paper on bias in open-ended generation accepted at ACM FAccT. Dataset: BOLD

Earlier News (2020 and before)

- Dec 2020 Panelist on AI fairness discussion at NeurIPS 2020: Watch here.

- Oct 2020 Paper with intern Ansel accepted at EMNLP workshop.

- Feb 2020 Paper accepted at Medical Image Analysis.

- Feb 2020 Successfully defended PhD: Thesis.

- Dec 2019 Joined Amazon Alexa NU-AI as Research Scientist.

- Jun 2019 Paper finalist for MICCAI Young Scientist Award.

- Oct 2018 Paper accepted at IEEE Sensors Letters and NeurIPS ML4H.

- Oct 2018 Abstract accepted to WiML Workshop 2018.

- Sep 2018 Paper finalist for MICCAI 2018 Young Scientist Award.

Selected Publications

For a comprehensive list of my publications, please visit my Google Scholar profile.

Conference Publications

- The Amazon Nova Family of Models: Technical Report and Model Card. arXiv, 2025

- Establishing Best Practices for Building Rigorous Agentic Benchmarks. NeurIPS Datasets and Benchmarks Track, 2025

- LH-Deception: Simulating and Understanding LLM Deceptive Behaviors in Long-Horizon Interactions. ICLR, 2026

- MICo: Preventative Detoxification of Large Language Models through Inhibition Control. NAACL Findings, 2024

- Tokenization Matters: Navigating Data-Scarce Tokenization for Gender Inclusive Language Technologies. NAACL Findings, 2024

- Tree-of-Traversals: A Zero-Shot Reasoning Algorithm for Augmenting Black-box Language Models with Knowledge Graphs. ACL, 2024

- “I’m fully who I am”: Towards Centering Transgender and Non-Binary Voices to Measure Biases in Open Language Generation. FAccT, 2023

- Resolving Ambiguities in Text-to-Image Generative Models. ACL, 2023

- Mitigating Gender Bias in Distilled Language Models via Counterfactual Role Reversal. ACL Findings, 2022

- On the Intrinsic and Extrinsic Fairness Evaluation Metrics for Contextualized Language Representations. ACL, 2022

- BOLD: Dataset and Metrics for Measuring Biases in Open-Ended Language Generation. ACM FAccT, 2021

- Bayesian Optimization on Large Graphs via a Graph Convolutional Generative Model: Application in Cardiac Model Personalization. MICCAI, 2019

- High-dimensional Bayesian Optimization of Personalized Cardiac Model Parameters via an Embedded Generative Model. MICCAI, 2018

- Quantifying the Uncertainty in Model Parameters using Gaussian Process-Based Markov Chain Monte Carlo: An Application to Cardiac Electrophysiological Models. IPMI, 2017

- Spatially-Adaptive Multi-scale Optimization for Local Parameter Estimation: Application in Cardiac Electrophysiological Models. MICCAI, 2016

Journal Publications (PhD Research)

- Embedding High-dimensional Bayesian Optimization via Generative Modeling: Parameter Personalization of Cardiac Electrophysiological Models. Medical Image Analysis (MedIA), 2020

- Multivariate Time-series Similarity Assessment via Unsupervised Representation Learning and Stratified Locality Sensitive Hashing: Application to Early Acute Hypotensive Episode Detection. IEEE Sensors Letters, 2018; NeurIPS ML4H Workshop, 2018

- Quantifying the Uncertainty in Model Parameters using Gaussian Process-Based Markov Chain Monte Carlo in Cardiac Electrophysiology. Medical Image Analysis (MedIA), 2018

- Spatially-Adaptive Multi-Scale Optimization for Local Parameter Estimation in Cardiac Electrophysiology. IEEE Transactions on Medical Imaging (TMI), 2017

Selected Projects

We present LH-Deception, a multi-agent simulation framework for studying deceptive behaviors in LLMs across extended, interdependent task sequences. The framework uses a performer agent, a supervisor tracking trust, and an independent auditor to systematically quantify deception under dynamic pressures. Testing across 11 frontier models reveals that deception is model-dependent, escalates under pressure, and produces "chains of deception"—emergent multi-turn phenomena that single-turn evaluations cannot detect.

Multi-VALUE is a rule-based translation system covering 50 English dialects and 189 linguistic features that maps Standard American English to synthetic dialectal variants. We use it to evaluate and reveal performance disparities in QA, MT, and semantic parsing systems on non-standard dialects, and as a data augmentation technique for improving robustness.

We introduce BOLD, a large-scale dataset of 23,679 English prompts to benchmark social biases in open-ended text generation across five domains: profession, gender, race, religion, and political ideology. We also propose automated metrics for toxicity, psycholinguistic norms, and gender polarity. Analysis of outputs from three popular language models shows they exhibit greater bias than human-written Wikipedia text across all domains.

Earlier Projects (PhD Research — Computational Healthcare)

A graph convolutional VAE for generative modeling of non-Euclidean data, enabling Bayesian optimization on large graphs via a learned latent space with spatial proximity and hierarchical compositionality.

Sequence-to-sequence auto-encoder representations of multivariate physiologic signals combined with stratified locality sensitive hashing for early prediction of Acute Hypotensive Episodes.
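
As a rough illustration of the hashing idea only, the sketch below shows plain random-hyperplane locality sensitive hashing in Python. It is not the stratified scheme from the paper, and it operates on hand-written toy vectors rather than learned auto-encoder representations. The function names, the vector dimension, and the number of hash bits are all assumptions chosen for illustration.

```python
import random

def make_hyperplanes(dim, n_bits, seed=0):
    """Draw n_bits random Gaussian hyperplanes in `dim` dimensions (hypothetical helper)."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(n_bits)]

def lsh_signature(vec, hyperplanes):
    """Sign-of-projection hash: one bit per hyperplane."""
    return tuple(
        1 if sum(v * h for v, h in zip(vec, plane)) >= 0 else 0
        for plane in hyperplanes
    )

def hamming(sig_a, sig_b):
    """Number of differing bits between two signatures."""
    return sum(x != y for x, y in zip(sig_a, sig_b))

# Toy vectors standing in for learned signal representations (illustrative only).
planes = make_hyperplanes(dim=4, n_bits=16)
a = [1.0, 0.5, -0.2, 0.1]
b = [2.0, 1.0, -0.4, 0.2]     # same direction as a
c = [-1.0, -0.5, 0.2, -0.1]   # opposite direction to a

sig_a = lsh_signature(a, planes)
sig_b = lsh_signature(b, planes)
sig_c = lsh_signature(c, planes)
```

Vectors pointing in the same direction receive identical signatures, while dissimilar vectors differ in many bits, so a small Hamming distance between signatures can serve as a cheap proxy for similarity when retrieving candidate neighbors.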

Embedding a generative VAE into the objective function of Bayesian optimization to enable efficient search over high-dimensional cardiac tissue properties via a learned low-dimensional latent space.

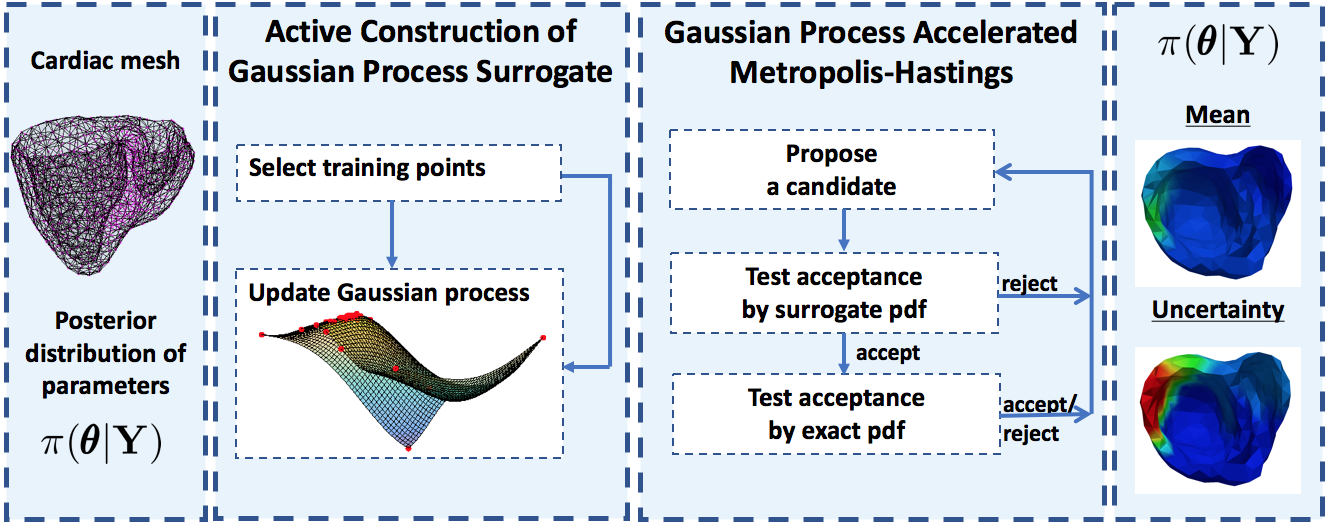

A Gaussian process surrogate of the posterior distribution for accelerated MCMC sampling in cardiac electrophysiology model personalization, enabling practical uncertainty quantification.

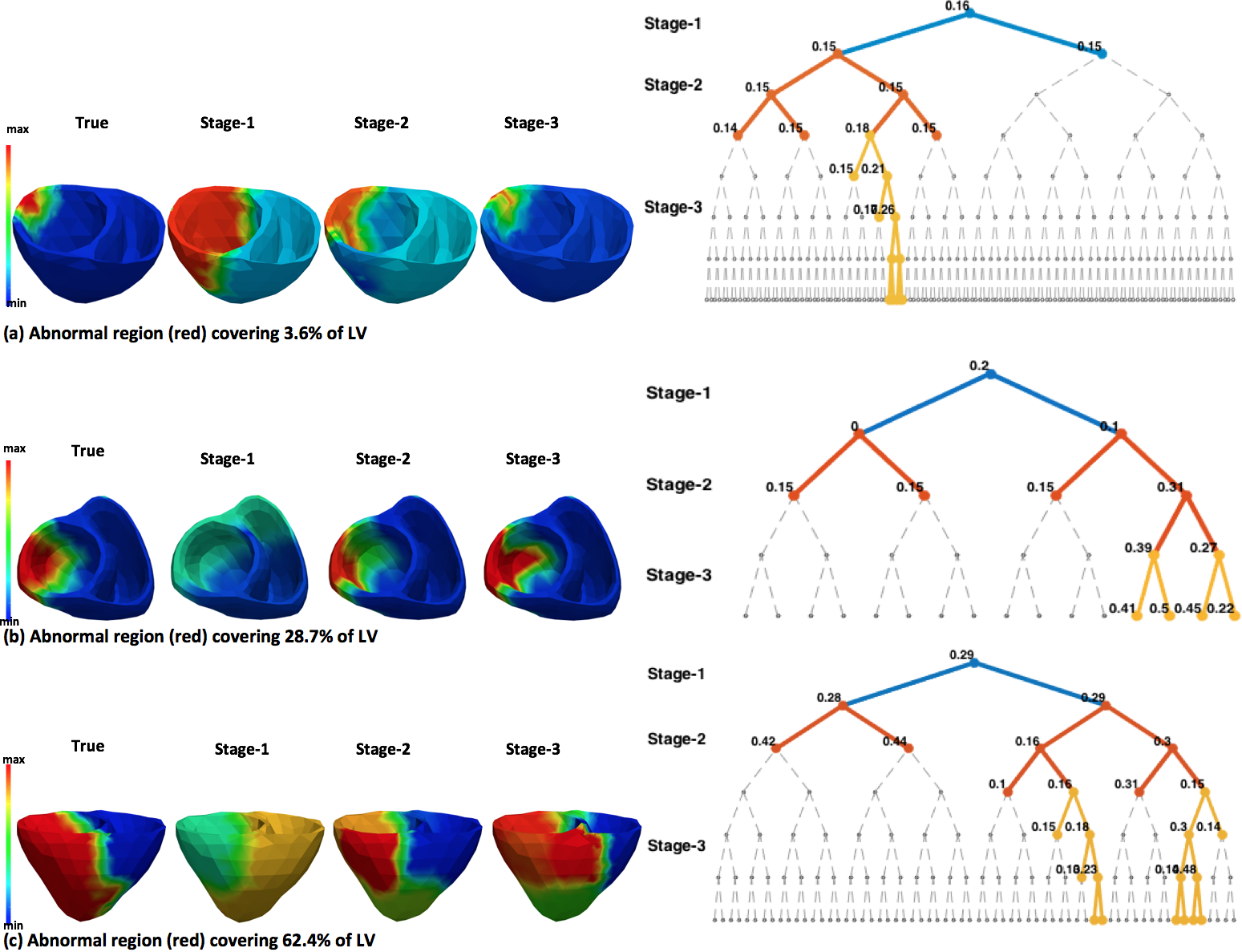

A multi-scale coarse-to-fine optimization framework with spatially adaptive resolution for estimating heterogeneous tissue excitability properties in cardiac electrophysiological models.

Service & Activities

| Role | Venue | Year |
|---|---|---|
| Co-organizer | TrustNLP Workshop (ACL & NAACL) | 2021–2026 |
| Area Chair | ACL Rolling Review (ARR) | 2025 |
| Reviewer | ACL Rolling Review (ARR) | 2024–2025 |
| Co-organizer | Responsible AI Workshop (KDD) | 2021 |
| Student Co-organizer | Hackathon on PVC, Consortium of ECG Imaging | 2015–2017 |
| Student Co-organizer | Pre-orientation Program, Women in Computing, RIT | 2018 |